Backups are essential for everyone’s important data. Companies need to back up their documents and forms; consumers need to back up their photos. It honestly doesn’t matter what the data is; the principle is the same: everyone needs backups.

Why?

The purpose of a backup is to get your data or operating environment back up and running if (or when) something goes wrong. To decide how to back up, you first need to decide what you want to defend against.

For example, consumers will want their precious photos backed up and accessible whenever they want them. They may choose an external hard drive, a server in their home, or a cloud-based solution like Dropbox or Google Photos. Their main concern is that the photos stay accessible; keeping bad guys out of the data comes second.

Likewise, a company will want its data available so it can recover from a failure, or from a malicious actor - a virus or a hacker - wreaking havoc. Its recovery plan should cover how to rebuild the environment after a catastrophe.

What?

Backups only work when all the rules are followed. Let’s discuss the most common rules, and the common mistakes that break them:

3-2-1 Backups

This common rule of thumb describes how to ensure you are following the process correctly. It means you need to have 3 copies of your data, on 2 types of media, with at least 1 kept off-site.

Three copies of your data does not mean copying a folder onto the same hard drive and calling it a day. It does not mean deleting the original after you’ve taken a backup. You need to maintain at least 3 copies on separate physical devices (the drives inside a RAID array do not count as separate copies, but we’ll discuss that below).

The two different media types are there to ensure that even if an interface goes obsolete, you can still access your backup. It’s not like Firewire, Zip drives, Super Disks, Compact Flash, 5 1/4″ floppy drives, or USB drives will ever go out of style, right? If you can’t access the medium to read your data, then your backup is useless.

The off-site part is much easier to solve these days, with many cloud-based services available and the option of running your own off-site server. This rule is to ensure that even if your building blows up, floods, catches fire, gets smashed, etc., you can still obtain a copy of your data. Not every building is capable of surviving a nuclear holocaust.
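To make the three parts of the rule concrete, here is a minimal Python sketch that checks a backup plan against 3-2-1. The `Copy` class, the `check_321` function, and the device and media names are all invented for illustration; it only counts what you tell it and cannot detect anything about real hardware.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    """One copy of the data. All field values here are illustrative."""
    device: str    # physical device holding the copy, e.g. "laptop-ssd"
    media: str     # media type, e.g. "ssd", "hdd", "cloud", "tape"
    offsite: bool  # True if the copy lives away from the primary location


def check_321(copies):
    """Return a list of 3-2-1 violations (an empty list means compliant)."""
    problems = []
    devices = {c.device for c in copies}
    if len(devices) < 3:
        problems.append(f"only {len(devices)} separate device(s), need 3")
    media = {c.media for c in copies}
    if len(media) < 2:
        problems.append(f"only {len(media)} media type(s), need 2")
    if not any(c.offsite for c in copies):
        problems.append("no off-site copy")
    return problems


if __name__ == "__main__":
    plan = [
        Copy("laptop-ssd", "ssd", offsite=False),
        Copy("usb-drive", "hdd", offsite=False),
        Copy("cloud-bucket", "cloud", offsite=True),
    ]
    print(check_321(plan) or "3-2-1 satisfied")
```

Because copies are counted by distinct device, a second folder on the same drive does not count as another copy, which matches the point made above.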

RAID is not backup

While RAID is excellent for servers and some workstations, it is not a backup. RAID combines multiple drives so that they appear as one unit and, with the exception of RAID 0 and JBOD, keeps your data redundant across those drives.

Backups need to be stored away from your active device. If a virus hits your main workstation or server, it should not spread to your backups (ideally). If you have RAID as your backup, then the virus got all your data anyways.

RAID is able to offer redundancy in case of a hardware failure, but it should not be the only method of keeping your data available. What is recommended depends on the number of drives in the array, as well as the purpose of the array. For most home users, RAID 1 with two drives is acceptable. I personally run 4 drives in a RAID 10 for speed and redundancy, but I can still potentially lose all my data if the right two hard drives fail.
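The “right two hard drives” remark can be worked through by brute force. This is a small sketch, assuming a 4-drive RAID 10 laid out as two mirrored pairs (drives 0+1 and 2+3) with a stripe across them; the drive numbering and function names are made up for illustration.

```python
from itertools import combinations

# Assumed layout: drives 0+1 form one mirror, drives 2+3 form the other,
# and the array stripes across the two mirrors.
MIRRORS = [(0, 1), (2, 3)]


def array_survives(failed):
    """The array survives as long as every mirror keeps at least one drive."""
    return all(any(d not in failed for d in mirror) for mirror in MIRRORS)


def fatal_two_drive_failures():
    """List every pair of drives whose simultaneous failure loses the array."""
    drives = [d for mirror in MIRRORS for d in mirror]
    return [pair for pair in combinations(drives, 2)
            if not array_survives(set(pair))]


if __name__ == "__main__":
    fatal = fatal_two_drive_failures()
    print(fatal)  # [(0, 1), (2, 3)]
    print(len(fatal), "of 6 possible two-drive failures lose the array")
```

Under that assumed layout, 2 of the 6 possible two-drive failures take out both halves of a mirror, so redundancy alone still leaves a real chance of total loss.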

External Hard Drives are not infallible

I have heard many times from people that they copied their photos to their external hard drive - and then deleted them from their primary hard drive! This violates the 3-2-1 rule above, and ensures you only have one copy of your “important” data!

An external hard drive is typically a regular internal hard drive, with an enclosure to connect via USB, eSATA, or even Firewire! Parts can break, just like a regular drive. Luckily, depending on the failure, you can simply move the drive into another enclosure.

Even so, this is not a great method of backing up - how often are you backing up your important data? How are you protecting the drive from fire or flood? Are you ensuring you’re not shaking the drive vigorously while it’s reading/writing? Are you deleting the original after it’s backed up?

Cloud Services

Cloud services such as Dropbox or Google have become quite popular. The data is replicated across data centers automatically, without you even noticing! Your data seems safe, right?

With commercial products, you still need to be able to trust whoever has access to your data. Is the company likely to go out of business? Are you uploading data that has travel restrictions (e.g. can you legally transfer other people’s personal data across borders)? Who else can access the information, such as by impersonating your user account, or by you leaving your laptop unlocked and logged in?

Other products exist that allow you to host your own cloud. I personally use NextCloud, as it’s open source and easy enough to set up. Its client apps allow me to automatically upload pictures from my phone as they’re taken, or other folders on my computer. The data is stored on a server I control, with limited access to others.

Whatever you choose, make sure it’s able to offer the features you need. If your business’s continuity plan relies on its data, then expect to pay for the quality you require.

When?

People ask me this question a lot, and I always answer with the same question.

How much data or time are you willing to lose if something bad happens?

If people are willing to lose a week’s worth of work, then I recommend weekly backups. If they’re willing to lose a year’s worth of data, I smack them.

For businesses, I typically push for daily backups. Because we can set backups to be incremental, each backup is small, and you can recover exactly what’s needed from the point in time you need. Daily backups use more space on the backup device than less frequent ones, but give the finest control over how a file or environment looked at a certain time.
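To make “incremental” concrete, here is a minimal Python sketch, assuming a backup tool that decides what to copy purely from file modification times. The function name `changed_since` and its parameters are made up for illustration; real tools usually compare more than that, such as file sizes or checksums.

```python
import os
from pathlib import Path


def changed_since(root, last_backup_time):
    """Yield files under root modified after last_backup_time.

    last_backup_time is a timestamp in seconds since the epoch, for
    example the value of time.time() recorded when the last backup ran.
    """
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            if path.stat().st_mtime > last_backup_time:
                yield path
```

Only the files this returns would need to go into today’s backup, which is why a daily incremental run stays small when little has changed.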

How?

First off, make sure you understand what you are protecting against. Understand what you want to protect from that disaster. Then, we can move forward.

There are many, many ways to perform backups. Single users can usually get away with copying their files to an external drive and a cloud-type service. Large enterprises will need to be able to spin up servers with current data quickly.

If you are able to centralize your data onto a server, then we can focus on that piece of the puzzle only! This would scale well, and allows you to have lower-specced hardware for your client machines. On Linux, I would recommend the rbackup package to push (or pull) data to a remote server, also running Linux. If you are short on funds, you can buy a Raspberry Pi, and an external hard drive - then plug it in at a friend’s house that you fully trust.

If you need Windows backups, I recommend using the built-in Windows Server Backup feature on Server-level Windows products. Again, ensure you have a remote target that is off-site and accessible. You’ll want to point your backups to that location, and set it to back up daily for the best redundancy.

File-based backups

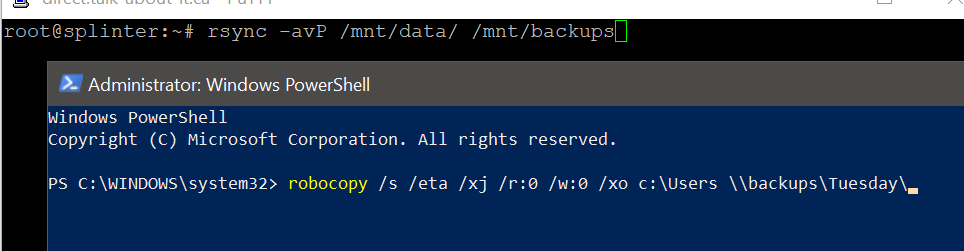

For both Windows and Linux, you can also do manual backups - useful if you’re about to make a large change. If you want file-based access to your backed-up files, use rsync on Linux (maintained by the Samba project and available in most distributions’ repositories) or robocopy on Windows (Vista and above).

For Linux (replace /source with your source directory; replace /destination with your backup target):

rsync -avP /source /destination

Explanation:

-a - Copies files/folders in Archive mode, preserving attributes (e.g. owner info, file permissions, etc.)

-v - Displays verbose information

-P - Equal to --partial --progress. Helps if the transfer may be interrupted part way through.
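If you would rather kick off the rsync command above from a script (for example a scheduled job), a thin Python wrapper is one option. This is only a sketch: `build_rsync_command` and `run_backup` are hypothetical helper names, and it assumes rsync is installed and on your PATH.

```python
import shutil
import subprocess


def build_rsync_command(source, destination):
    """Build the argument list for `rsync -avP source destination`."""
    return ["rsync", "-avP", str(source), str(destination)]


def run_backup(source, destination):
    """Run rsync; raises CalledProcessError if rsync exits non-zero."""
    if shutil.which("rsync") is None:
        raise FileNotFoundError("rsync is not installed or not on PATH")
    subprocess.run(build_rsync_command(source, destination), check=True)


if __name__ == "__main__":
    # Shows the command that would run, without touching any files.
    print(" ".join(build_rsync_command("/source", "/destination")))
```

One detail worth knowing when you fill in the paths: rsync treats `/source` and `/source/` differently. Without the trailing slash the directory itself is created inside the destination; with it, only the directory’s contents are copied.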

For Windows (replace source\ with the drive and path of your source; replace destination\ with your backup target):

robocopy source\ destination\ /s /eta /xj /r:0 /w:0 /xo

Explanation:

/s - Copies subdirectories, except empty ones

/eta - Displays the ETA for the current file. Useful if watching, but does use extra resources. You can also make the window smaller to speed it up.

/xj - Excludes Junction Points. Unless you are using them specifically, it’s recommended, especially if copying the C:\Users or C:\Windows folder.

/r:0 - Retry 0 times if you can’t read a file. Speeds up backing up, but you need to check the report at the end.

/w:0 - Waits 0 seconds to retry when it can’t read a file.

/xo - Excludes older files: a source file is skipped when the copy already in the destination is newer. Since robocopy also skips unchanged files by default, repeat runs behave like an incremental backup.
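Since /r:0 means unreadable files are skipped rather than retried, it helps to check how the run ended. Besides the printed report, robocopy returns an exit code built from bit flags, and any value of 8 or higher means at least one failure. The Python sketch below decodes that code; the function name and the message wording are my own, and it assumes you captured the exit code yourself (for example from a script that launched robocopy).

```python
# Bit flags that make up robocopy's exit code. The message text is paraphrased.
FLAGS = {
    1: "one or more files were copied",
    2: "extra files or directories exist in the destination",
    4: "mismatched files or directories were found",
    8: "some files or directories could not be copied",
    16: "serious error, nothing was copied",
}


def describe_exit_code(code):
    """Return (ok, messages) for a robocopy exit code.

    ok is False when the code is 8 or higher, meaning at least one failure.
    """
    messages = [text for bit, text in FLAGS.items() if code & bit]
    if not messages:
        messages = ["nothing needed copying"]
    return code < 8, messages


if __name__ == "__main__":
    for code in (0, 1, 9):
        print(code, describe_exit_code(code))
```

Note that, unlike most command-line tools, a non-zero exit code from robocopy is not automatically an error: 1 simply means files were copied.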

Step two - testing

A backup is worth nothing if you cannot restore from it. Whatever method you choose, you need to test that you can access your data when the time comes!

For Linux systems, make sure you are comfortable either restoring an image, or installing a fresh copy of your distribution and copying your data back. This is where rbackup and/or rsync would come in very handy. Remember to also back up your Samba configuration, if applicable.

For Windows systems, it’s great to be able to use the image-based backups, but they are usually finicky to restore, especially to different hardware. What you can try is to restore the image to another computer, then boot an alternative OS to ensure you can access the files properly. You can also use Windows to “mount” the image, and browse it as though it were another drive.
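For file-based backups, one way to test a restore is to compare checksums between the original files and the restored copy. The following is a minimal Python sketch, assuming both directory trees are reachable from the same machine; `sha256_of` and `verify_restore` are hypothetical names, and it only checks file contents, not permissions or ownership.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(source_root, restored_root):
    """Return relative paths that are missing or differ in restored_root."""
    source_root, restored_root = Path(source_root), Path(restored_root)
    problems = []
    for src in sorted(source_root.rglob("*")):
        if not src.is_file():
            continue
        rel = src.relative_to(source_root)
        copy = restored_root / rel
        if not copy.is_file() or sha256_of(src) != sha256_of(copy):
            problems.append(str(rel))
    return problems
```

An empty list means every source file was found in the restored tree with identical contents. Anything else is a file you would not have gotten back.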

Other Notes

Currently (mid 2018), storage is cheap. You can get 4 TB backup drives for $100 CAD. This is an ideal time to work on getting your backup solution up and running. But for the love of <insert deity here>, test your backups, and keep something off site and up to date!

If you have important data, you need to keep it safe. Whether you have a way to automatically back up your devices (preferred), or you need to manually kick off the backup process, do it! Backups tend to be needed right before you take them, so get started now, while your data is available!

Let’s say you have a system failure that prevents you from accessing your data - not all hope is lost! If the storage devices are OK, then most decent computer shops should be able to retrieve your data. In the event they cannot, you can expect to pay for a data recovery service, and they are not always cheap. Trust me, it’s much cheaper to prepare now and not need it, as opposed to needing it and not having it.